entimICE FastTrack and Posit – The Ideal Couple for Clinical Research?

Photo © Shutterstock

The R programming language has become a cornerstone in clinical research due to its extensive range of statistical tools and graphical capabilities. Central to its flexibility and power is the comprehensive ecosystem of packages available through CRAN (Comprehensive R Archive Network), Tidyverse, Pharmaverse, Bioconductor and GitHub. However, managing these packages presents both significant challenges and notable benefits for researchers.

Posit (the company formerly known as RStudio) provides an integrated development environment (IDE) for R, along with a range of tools and features that significantly enhance R package management. Its benefits in the context of clinical research are particularly pronounced due to the complex and sensitive nature of the data and analyses involved.

entimICE FastTrack is an integrated Clinical Data Repository (CDR) and Statistical Computing Environment (SCE) platform, designed and built with decades of experience from both entimo and its industry customers. It provides effortless regulatory compliance, while enabling researchers to manage tasks and monitor progress towards successful submissions.

Bringing entimICE FastTrack and Posit together pairs two great systems, and helps researchers make the most of R’s powerful capabilities.

Challenges of using R

Because it is such a powerful tool, R can pose challenges for programmers and scientists who want to get the most out of it. Below are five common ones – read further to find out how Posit and entimICE FastTrack work together to help overcome them.

Version Control and Reproducibility

One of the most pressing challenges in clinical research is ensuring reproducibility. Because R packages are frequently updated, changes in functions or dependencies can lead to discrepancies in research results over time. Maintaining consistent versions of packages across different stages of research and among different collaborators is crucial, but can be difficult without rigorous version control practices.

Dependency Management

Many R packages rely on other packages, creating complex dependency chains. A single update in one package can potentially disrupt the functionality of others. This interdependency requires careful management to avoid conflicts and ensure that all necessary packages work harmoniously together.

Installation and Compatibility Issues

Installing and configuring R packages can be problematic, particularly when dealing with packages that require compilation or are dependent on system-specific libraries. These issues are amplified in clinical research environments where stringent IT policies and varying operating systems are common.

Documentation and Learning Curve

While the breadth of available packages is a strength, it also presents a learning curve. Not all packages are equally well-documented, and deciphering how to use a package correctly and effectively can be time-consuming. This is particularly important in clinical research, where the accuracy and reliability of analyses are paramount.

Regulatory Compliance

Clinical research is heavily regulated, requiring strict adherence to guidelines for data handling, analysis, and reporting. Ensuring that all R packages used are compliant with these regulations and that their usage is documented and validated is a significant challenge.

Benefits of using R with Posit

Combining the strengths of R with the extended possibilities of Posit offers numerous benefits to users in the pharma and research sector. Here are seven concrete advantages; keep reading to learn how these tools mesh with entimICE FastTrack.

Streamlined Package Installation and Updates - Posit Package Manager (PPM)

PPM provides one or more centralized repositories for R packages, which simplifies the process of finding and installing the correct versions of packages. It offers curated and vetted packages, ensuring that the packages are reliable and reducing the risk of installing malicious or buggy software. PPM supports versioned snapshots, allowing users to install packages as they existed on a specific date, which is crucial for reproducibility. Packages can be maintained within the repository, removing dependencies on external third parties (such as CRAN or GitHub).

Enhanced Reproducibility - Project Environments

Posit allows you to create project-specific libraries where each project can have its own set of packages and versions, avoiding conflicts between projects. Packrat and renv are integrated into Posit, facilitating the creation of isolated, reproducible environments. This ensures that analyses can be reliably reproduced by other researchers or at a later date.

Dependency Management and Tracking

Posit can automatically detect and install package dependencies, ensuring that all required packages and their dependencies are available and compatible. It provides tools to visualize and manage package dependencies, helping to identify and resolve conflicts easily.

User-Friendly and Intuitive Interface

Posit’s user-friendly interface includes features such as point-and-click package installation, updates, and management, making it accessible even for researchers who are not programming experts. Access to package documentation and vignettes directly within the integrated development environment helps researchers understand and use packages more effectively.

Enhanced Productivity Features

Posit provides advanced script management features, including syntax highlighting, code completion, and debugging tools, which enhance coding efficiency and reduce errors. For researchers developing their own packages, Posit offers robust development tools, including testing frameworks and build management, both of which streamline the development process.

Compliance and Security

PPM can restrict access to certain packages and versions, ensuring that only approved and compliant packages are used in clinical research settings. Posit provides audit trails for package installations and updates, which are essential for regulatory compliance in clinical research.

Community and Support Resources

Posit has a large and active user community that runs forums and discussion groups where researchers can seek help and share knowledge. Posit provides professional support services, which can be invaluable in troubleshooting complex package management issues and ensuring smooth operations in a clinical research environment.

Posit and entimICE FastTrack

Combining Posit and entimICE FastTrack brings together the strengths of both environments, with significant benefits for clinical data analysis and reporting.

Effortless Compliance

entimICE FastTrack ensures effortless compliance and end-to-end traceability for all artifacts, including data, programs, images, and large files such as biomarkers. Integrated version management tracks changes, while pre-configured workflows streamline validation and lifecycle management. Seamless integration with Posit keeps all data and program code changes updated in the repository.

Personalized Computing Environment

entimICE FastTrack's intuitive UI enables users to design custom dashboards and context-specific collections of objects of interest. Interactive visual reports track dependencies from raw data to submission-ready deliverables and monitor study progress. Users can complete all R- and Python-based programming tasks using Posit, which can provide a dedicated repository/execution environment per study to ensure compliance.

Shared or Individual Work Areas

entimICE FastTrack supports collaborative work on clinical data without the need for exporting snapshots or cumbersome check-out/check-in procedures. The data repository provides a single source of truth for all data processing steps. Additionally, users can establish individual work areas for exploratory work.

Ready for Exploratory and Submission Workflows

The system supports both exploratory data analysis using a variety of statistical tools, including Posit, and generating submission-ready deliverables through tightly integrated R functionalities. entimICE FastTrack offers clinical data scientists the freedom to explore where appropriate, alongside strict guidance and conformance where necessary.

Language Agnostic

entimICE FastTrack is truly language-agnostic. While fully supporting R and Python through Posit integration, the system also accommodates other toolsets, including the industry-standard SAS programming language along with tools like Enterprise Guide or SAS Studio. Integration with SAS Viya is currently in development.

Pairing Up

R package management is a double-edged sword in clinical research. The challenges, including version control, dependency management, installation issues, documentation, and regulatory compliance, make meticulous planning and management necessary. However, the benefits, such as enhanced analytical capabilities, reproducibility and efficiency, make R an invaluable asset in clinical research. Effective package management practices are essential to leveraging R's full potential while mitigating the inherent challenges, ultimately contributing to the advancement of clinical research.

Using Posit for R package management in clinical research offers numerous benefits. These include streamlined package installation and updates, enhanced reproducibility, effective dependency management, and a user-friendly interface. Additionally, Posit fosters collaboration, enhances productivity, and provides extensive community and professional support. These features make Posit an invaluable tool for managing R packages, ultimately contributing to more reliable and efficient clinical research practices.

The integration of Posit with entimICE FastTrack allows data scientists and biostatisticians in highly regulated environments to enjoy the best of both worlds: They gain the freedom to use a large variety of R packages for exploratory analysis, while ensuring full compliance during submission runs using an exclusive validated R environment. This powerful combination of two tools significantly enhances clinical data science, leading to faster and more successful submissions.

- Written by: entimo Marketing

Data Storage Evolves

Increasing volumes and changing types of data require new approaches.

Considerations of cost and ownership are encouraging companies in pharmaceutical research to separate their data storage from their computation needs. The widespread perception that storage is inexpensive and easily extensible compared to computing power is not necessarily correct. The key point is that organizations should scale storage and computing separately: the two do not always grow in tandem, so separating them makes savings easier to achieve. A closer look at the situation also reveals that ownership of data used in analysis and computational processes may be more complex. A computational environment might not be considered the owner of the data it uses, and so that data is stored elsewhere. Similarly, data silos within organizations may be set up such that data cannot be used for downstream processes, so that exports or copies have to be created to perform the computations.

Clinical data used to be more homogeneous.

Another development affecting how organizations evaluate storage and computation is the changing nature of the clinical data gathered in the course of trials. In previous years, clinical data was primarily stored as SAS datasets natively in file systems, along with additional items derived from or working with those datasets, such as documents, programs, output, and logs. As data volumes have grown (thanks to more studies, bigger studies, more types of data used in studies, and data kept in the active systems for secondary use), the industry standard has shifted away from clinical/tabular data and toward heterogeneous collections such as x-omics data, biomarker data, and healthcare data from wearables. The need for version control and audit trails imposes new and different storage requirements on these kinds of collections. This contrasts with SAS datasets in a file system, where one of the main challenges was rapid availability, stemming from the need for rapid input-output when working with SAS.

The desire for end-to-end traceability, along with regulatory requirements, has led entimo, in both its products entimICE DARE and entimICE FastTrack, to follow the principle of ownership of the data in its repository. This applies to all metadata, and also to data, which is mostly held in a central file system. Following an evolutionary path, entimICE FastTrack is designed to offer more flexibility and connectivity for external storage. Within entimICE DARE, there is still an option to store clinical data in a database, with entimICE retaining ownership.

As the size and nature of data gathered during clinical trials expand and change, the tools needed to analyze and understand that data are also evolving. Consider the example of a trial sponsor that decides to build clinical data storage for all data that comes in from EDC systems and other sources. While the storage is organized in a fashion specific to the company, it uses approaches that are well understood. Further, the sponsor needs all other tools to interact with the data in this cluster.

Engineers are working to ensure that entimICE FastTrack can adapt to this new environment by projecting data from the storage into its own repository, following a proxy concept and other comparable methods. The software only projects relevant data, and is able to track changes on the projected data as well as mirroring this information in its own traceability records. This capability is important for keeping information needed for compliance all within entimICE FastTrack.

Using a clinical repository approach, entimICE FastTrack can have full ownership of and control over dedicated production life cycle areas in the external data. This guarantees control for production runs within entimICE, where greater oversight of specific workflows is necessary to guarantee regulatory compliance. The file repository as well as the data projection remain transparent for end users. For example, in a study’s development lifecycle area, programs from the file system and datasets from the projection can appear side by side, and can both be accessed and used.

Contemporary data is more heterogeneous.

The combination of these approaches enables entimICE FastTrack to evolve with the changes in how organizations throughout the drug development process are addressing increasing amounts of data, which not only arrive in increasingly heterogeneous formats but also come from sources outside of entimICE.

- Written by: entimo Staff

Inevitable Choices

“Concurrency is everywhere in modern programming, whether we like it or not,” reads an MIT introduction to the subject. In a typical computing environment, machines and software will be contending with concurrency on numerous levels: from multiple processors on the same chip, through multiple pieces of software running at a single workstation, up to multiple (and sometimes multitudes of) computers running on one network.

Developers use techniques such as separate processes, threads, time-slicing, shared memory, message-passing and more to avoid problems such as race conditions, where the outcome of a process is not always the same because it depends on the uncertain order in which computations are executed.
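As a minimal illustration of the race-condition problem and one common remedy, the Python sketch below protects a shared counter with a lock. The class, function names and thread counts are illustrative assumptions and are not taken from any entimo code.

```python
import threading


class SafeCounter:
    """Counter whose increments are protected by a lock (illustrative only)."""

    def __init__(self):
        self._value = 0
        self._lock = threading.Lock()

    def increment(self):
        # "Read, add one, write back" is not atomic on its own;
        # holding the lock ensures only one thread does it at a time.
        with self._lock:
            self._value += 1

    @property
    def value(self):
        with self._lock:
            return self._value


def run_workers(counter, n_threads=8, n_increments=10_000):
    """Start n_threads threads that each increment the counter n_increments times."""

    def work():
        for _ in range(n_increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value
```

With the lock in place, the final value is always the number of threads multiplied by the number of increments, regardless of how the threads are scheduled.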

For a Statistical Computing Environment in a carefully regulated sector like pharma development, keeping track of concurrent operations is crucial. Regulatory submissions, and ultimately the safety of new medications, will depend on the integrity of the data being submitted. That, in turn, depends on how well the software can handle demands from many different users.

“Concurrency is all over the place, no matter how you look at it,” says Alexander Lüders, a senior software engineer at entimo. “You can work with a single shared repository, or you can check out sections of data into a sandbox, but the issue remains because you eventually have to merge the checkout back to the repository.”

As a result, entimo has chosen to work directly with the questions of concurrency. “A sandbox still has the problem of how to resolve conflicts,” adds Marc Jantke, one of entimo’s board members. “You can have situations where more than one user wants to check something back into the master branch, and what do you do then? You still have to work through that question.”

Jantke points to other challenges for systems that involve check-outs and redundant copies. First, making and keeping all of them consumes resources and time, especially in environments where validation may require retaining an entire audit trail of data versions. Further, the data volumes for clinical studies may be large enough that a check-out approach in the computing environment could slow down performance. If an organization had infinite resources, that would not be an issue, but every company faces some kinds of limits, and a sandbox/check-out architecture may reach them more quickly than an approach that works with concurrency directly. Second, the computing required to accommodate check-outs can become a performance question, particularly given the size and relationships in data volumes in modern pharmaceutical studies. Again, this is less of a theoretical problem and more of a practical one, given that even the largest development programs have to work within budgets. “Those are non-trivial challenges,” says Jantke.

“A check-out approach faces the problem of making a consistent copy. That can lead to a lot of blockages for other users or would force us to have everything under version control,” adds Lüders. On a large study or in an analysis involving many users, that could slow such a system significantly.

In short, you can’t get around concurrency, so you might as well deal with it directly. That’s the business case entimo has made, and it has proven to work: our customers are able to collaborate efficiently even with hundreds of users working concurrently on a shared repository.

- Written by: Douglas Merrill

Massive Gain in Efficiency

By Alexander Lueders, Douglas Merrill, Vegard Stenstad and Henrik Steudel

Product development team members at entimo combined process optimizations to generate a 27-fold increase in efficiency. Find out how.

A key feature in entimICE is traceability which, among other things, keeps track of which files have been created or updated by a program run. (A program in this context is an end-user business program such as SAS/R, or an entimICE script. The program may have many different versions, with each version reflecting a change made to its source or its parameters.) When a user runs a program, entimICE creates a program execution for the current program version. This execution contains information about which objects have been read or written or deleted. Usually the objects that the program uses are files, but entimICE also keeps track of macro calls and database view usages.

The challenge

One of the entimICE software development teams at entimo had a task to create a tool that would migrate data from an old relational data model to a new document-based data model for a large (1 TB or more) volume of data. Further, the tool needed to be able to validate that all of the data had been transferred from the old system to the new one.

“A program execution trace database is very complex,” says Vegard Stenstad, who led the effort. “There are nested executions, as well as used objects and conflicting executions.” Further, the tool had to be able to stop and resume during the migration. It needed to work as quickly as possible.

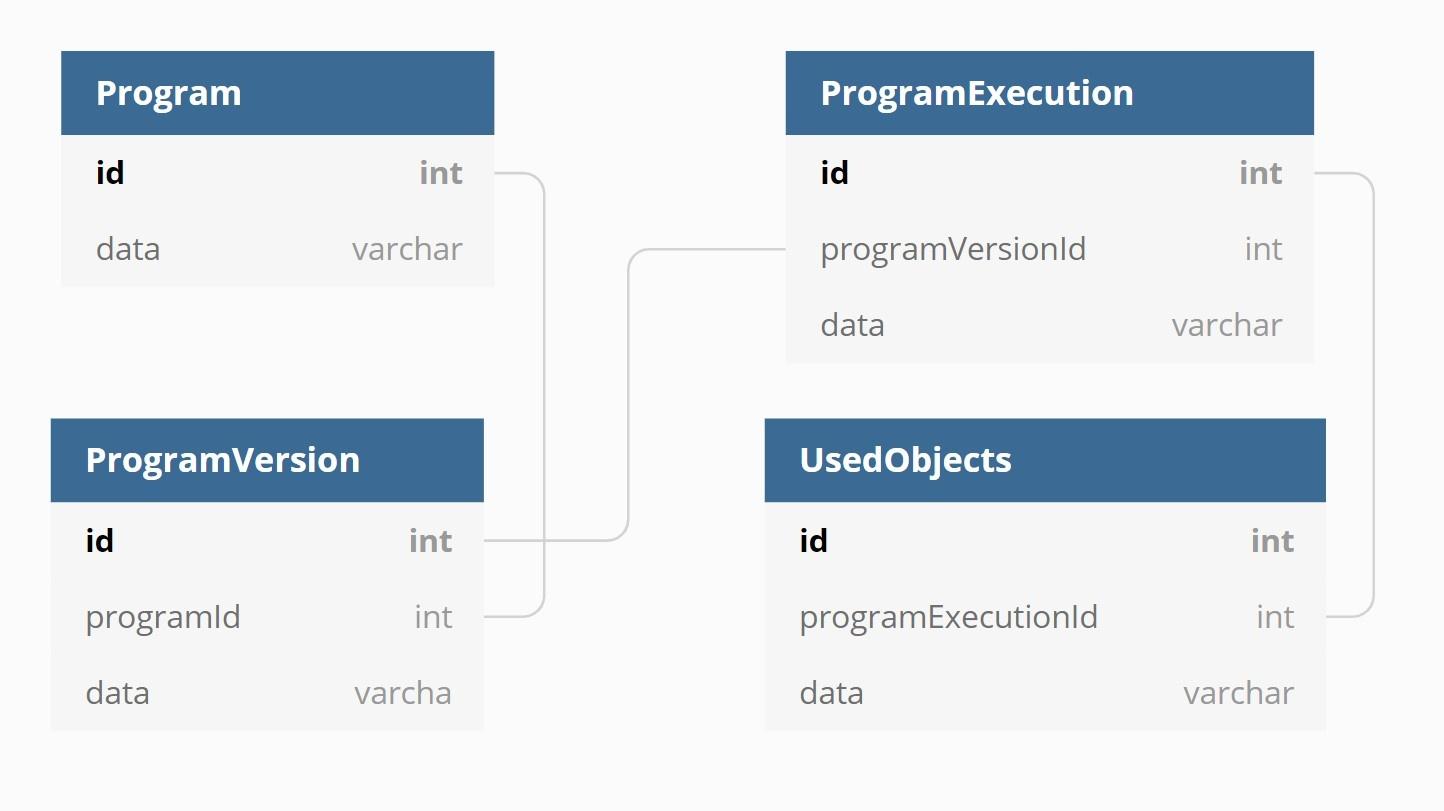

Understanding the tool requires looking at the use case and the existing database structure. When an entimICE user runs a program in an area where tracing is enabled, the application records everything that the program does. That includes recording information about the program itself, any files used for input or output, log files, database connections, and more. This data is then stored in a relational database (Oracle).

In a production environment, this relational database can grow very large over time. Further, modern environments produce data in numerous different structures. The program execution trace under consideration here distributes data across multiple independent data stores and works with varied data structures. In this case, the team chose MongoDB because its document-based model is a better fit for execution trace records than a relational database. The migration achieves two important goals: it reduces volume and load on the central classic Oracle database, and at the same time it benefits from MongoDB’s built-in clustering capabilities.

Fig. 1: A very simplified view of the relational database

Building the tool

One of the target use cases for the tool was a set of roughly five million program executions. Those executions involved an average of 43 objects, so that led to as many as 550 million lookups in the complete task. These significant numbers meant that the team not only had to find a way to do the migration, but also had to get the task to run within an amount of time that met business and operational requirements.

The tool accomplishes its goal in five steps:

- Reading the data from the old database

- Cleaning the data

- Transforming the data

- Persisting the data

- Validating the migrated data

The last step, validating the data, is crucial because it ensures that the integrity of the data has been kept. Because of the importance of validating data and data integrity, those were the team’s initial priorities and performance took a back seat. As a result, the first implementation was slow: it took up to 14 days to manage a complete migration of around 1 TB of data.

Each step toward the goal had a corresponding part in the tool. First, a pre-processor initialized the conditions that the tool required. The reader and chunk iterator dealt with organizing and reading chunks of data from Oracle, as well as stopping and starting. The transformer did the actual work of transforming the data. The persister was initially not separate, but was split out during the process of optimization. It stored the data to the new database. The validator ensured that all data was transferred correctly.

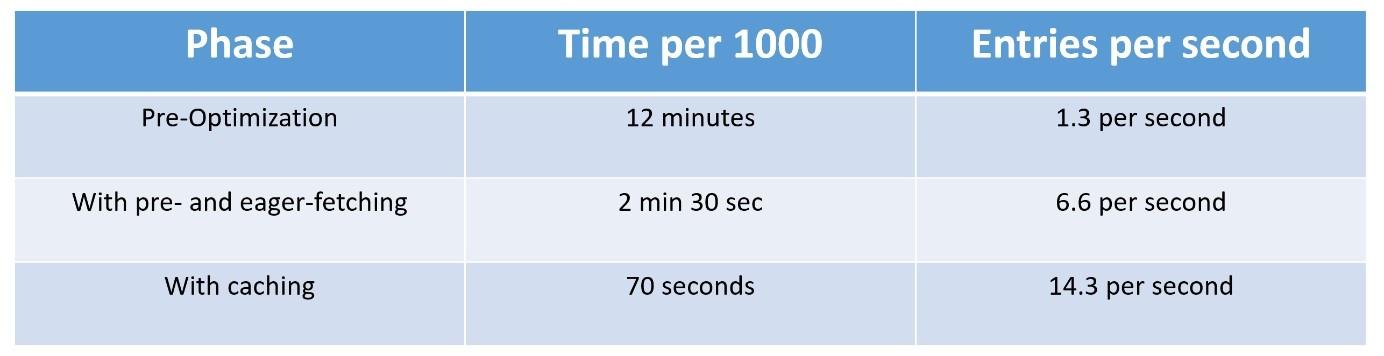

Fig. 2: Improvements gained by optimization

How does the tool work?

To migrate the data, the team initially built a multi-threaded tool that used an iterative chunking approach to the data. Each thread was independent, handling its own reading, processing, and writing. A synchronized singleton coordinated among the threads and distributed the chunks of data to them.

The team divided the tool into five parts: a pre-processor, a combined reader and “chunk iterator,” a transformer, a persister, and a validator.

Why was it important to have a pre-processor? Within entimICE, nested executions could lead to a situation in which some executions could be deleted, so developers made a design choice to clone executions. This approach led to duplications, which should be ignored in a migration. The pre-processor was built to identify all of the clones, leading to a list of top-level executions for the migration, which thus picks up all of the nested executions as well. The pre-processor creates an “ignore list” of the clones, which do not require migration.

The “chunk iterator” was a synchronized singleton that provided each thread with a list of executions to process in chunks of 1000 executions. The chunks were stored in MongoDB, and any errors were noted. When the tool was started, it recorded and stored a max execution ID, so that it would not process any data that was added after the start of the tool. The reader was an extremely simple part of the tool, just requesting chunks from the chunk iterator and calling the transformer to process the chunk.
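The idea of a synchronized chunk dispenser can be sketched in a few lines of Python. This is a simplified sketch under stated assumptions, not the team's implementation: the class name, its parameters and the in-memory list of IDs are hypothetical, and the stop-and-resume bookkeeping described above is left out.

```python
import threading


class ChunkIterator:
    """Thread-safe dispenser of execution-ID chunks (hypothetical sketch)."""

    def __init__(self, execution_ids, max_execution_id, chunk_size=1000):
        # Ignore anything with an ID above the maximum recorded at start-up,
        # so data added after the tool started is not processed.
        self._ids = [i for i in sorted(execution_ids) if i <= max_execution_id]
        self._chunk_size = chunk_size
        self._position = 0
        self._lock = threading.Lock()

    def next_chunk(self):
        """Return the next chunk of IDs, or an empty list when none are left."""
        with self._lock:  # only one worker thread may advance the position at a time
            start = self._position
            self._position = min(start + self._chunk_size, len(self._ids))
            return self._ids[start:self._position]
```

Each worker thread would call `next_chunk()` in a loop and stop when it receives an empty list. Because the position is only advanced while holding the lock, no two threads receive the same execution.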

The transformer translated old entities to new entities; that is, Oracle execution traces were transformed into MongoDB data execution traces. Along the way, it improved the handling of program execution data by removing irrelevant objects, correcting program versions, and so forth. Upon completion of the translation, it added the processed chunk of 1000 executions to the persist thread queue.
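To make the relational-to-document translation more concrete, here is a small Python sketch that folds one execution row and its related used-object rows into a single nested document. All field names, and the rule for what counts as an irrelevant object, are hypothetical; the real entimICE data model is not described in this article.

```python
def to_document(execution_row, object_rows, irrelevant_types=frozenset({"TEMP"})):
    """Fold one execution row and its used-object rows into one document.

    Field names and the 'irrelevant' rule are assumptions made for illustration.
    """
    return {
        "_id": execution_row["id"],
        "program_version": execution_row["program_version"],
        "used_objects": [
            {"path": o["path"], "access": o["access"]}
            for o in object_rows
            # keep only this execution's objects, and drop irrelevant ones
            if o["execution_id"] == execution_row["id"]
            and o["type"] not in irrelevant_types
        ],
    }
```

In a relational model the used objects live in a separate table and are joined at query time. In the document form they travel with the execution, which is one reason a document store can suit trace records of this kind.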

The persister was originally part of the transformer, but during optimization the team moved it to its own thread. It persisted data to MongoDB and marked chunks as completed. It was a very simple and reusable tool, one that used a blocking queue to receive from the processing threads. As long as there was data in the queue and the readers were going, it would keep looping, and whenever it found something it would write that to MongoDB.
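A dedicated writer thread fed by a blocking queue is a standard producer-consumer pattern. The Python sketch below shows the pattern under simplifying assumptions: a plain list stands in for the MongoDB collection, and a sentinel object signals the end of input, whereas the tool described above checks whether the readers are still running. All names are hypothetical.

```python
import queue
import threading

_DONE = object()  # sentinel telling the persister that no more chunks will arrive


def persister(chunk_queue, store):
    """Take transformed chunks off the queue and write each one in bulk."""
    while True:
        chunk = chunk_queue.get()  # blocks until a chunk is available
        if chunk is _DONE:
            break
        store.extend(chunk)  # stand-in for a single bulk insert


def run_pipeline(chunks):
    """Feed chunks to a persister thread and return everything it stored."""
    chunk_queue = queue.Queue(maxsize=4)  # bounded, so producers cannot run far ahead
    store = []  # stand-in for the target collection
    writer = threading.Thread(target=persister, args=(chunk_queue, store))
    writer.start()
    for chunk in chunks:  # in the tool described above, transformer threads do this
        chunk_queue.put(chunk)
    chunk_queue.put(_DONE)
    writer.join()
    return store
```

Separating the writer from the processing threads means that transformation can continue while a previous chunk is still being written, and the bounded queue keeps memory use in check if writing falls behind.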

The final part of the tool was the validator, which was run after the migration was completed. It built a list of program executions in Oracle that should have been transferred to MongoDB and compared that list against what was actually written to MongoDB.
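At its core, this kind of validation is a set comparison. A minimal Python sketch, assuming executions can be identified by a simple ID (the function and key names are hypothetical):

```python
def validate_migration(expected_ids, migrated_ids):
    """Compare the IDs that should have been migrated with those actually written."""
    expected, migrated = set(expected_ids), set(migrated_ids)
    return {
        "missing": sorted(expected - migrated),     # expected but never written
        "unexpected": sorted(migrated - expected),  # written but not expected
    }
```

A migration passes this check only if both lists come back empty. A real validator would probably also compare the content of each record, which this sketch does not attempt.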

Improving performance

The team found ways to enhance the tool, and wound up improving its performance massively. They employed five major techniques to optimize:

- Increasing JDBC driver fetch size

- Bulk writing of data to the new document database

- Pre-fetching data & using entity graphs when possible to avoid lazy loading

- Pre-loading commonly used lookup tables

- Caching of commonly used data

The first optimization concerned the ignore list that the pre-processor was building. That list initially took almost four hours to generate. Delving into the parameters of the processes involved, the team found that the default query fetch size for Oracle was 10, so the pre-processor was going back and forth an immense number of times to fetch small batches of rows. They reset the size to 100,000. After that change, the ignore list took less than five minutes to generate. That near fifty-fold improvement in the pre-processing had a medium-sized effect on the overall task. The team also gained some efficiencies in the validator because it queried Oracle directly as well, so setting the fetch size higher improved performance in validation too.
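The effect of the fetch size is easy to see with a little arithmetic: every fetch is one round trip between the application and the database. The Python sketch below counts round trips for a hypothetical result set of five million rows; the row count is an assumption chosen for illustration, not a figure for the ignore list.

```python
def round_trips(row_count, fetch_size):
    """Number of fetch round trips needed to read row_count rows."""
    return (row_count + fetch_size - 1) // fetch_size  # integer ceiling division


rows = 5_000_000  # hypothetical result-set size
print(round_trips(rows, 10))       # 500000 round trips with a fetch size of 10
print(round_trips(rows, 100_000))  # 50 round trips with a fetch size of 100,000
```

In JDBC, the fetch size can be set per statement with `Statement.setFetchSize`. Larger values trade memory on the client side for fewer round trips.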

After the fetch size was increased, writing to MongoDB became the bottleneck for generating the ignore list. The tool was sending what to write as a task list, but the actual writing was still happening one by one. Changing the method from “saveAll” to “insert” forced bulk writing, which removed writing speed as a constraint. As part of this second optimization, the team also moved persisting to its own thread. When combined with bulk writing, the time required for persisting dropped from 90-120 seconds down to 2-6 seconds, depending on the chunk size. (The times discussed in this article are all related to the test hardware and could be changed by using production hardware. The conclusions for the configuration and software usage remain valid, and the points about optimization can be generalized beyond any particular configuration.) The team decided this was sufficiently efficient. These optimizations together also had a medium-sized effect on overall performance.
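The difference between writing one by one and writing in bulk can be illustrated with an in-memory stand-in that simply counts write calls. This Python sketch is not a MongoDB client; the class and its method names are chosen for illustration only.

```python
class CountingStore:
    """In-memory stand-in for a document store that counts write calls."""

    def __init__(self):
        self.documents = []
        self.write_calls = 0

    def insert_one(self, document):
        self.write_calls += 1  # one call (one round trip) per document
        self.documents.append(document)

    def insert_many(self, documents):
        self.write_calls += 1  # one call (one round trip) per batch
        self.documents.extend(documents)


def write_one_by_one(store, chunk):
    """Write each document in the chunk with its own call."""
    for document in chunk:
        store.insert_one(document)


def write_in_bulk(store, chunk):
    """Write the whole chunk with a single call."""
    store.insert_many(chunk)
```

For a chunk of 1000 documents, the first approach makes 1000 write calls and the second makes one, while the stored data ends up identical. Against a real database, each call carries network and protocol overhead, which is why batching tends to pay off.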

The third optimization turned out to be a game-changer for the team. In the original design, the tool looped over program executions and loaded them one by one, also loading any nested executions. The team changed to bulk loading parent executions along with the first level of nested executions. “Fetching them for up to 1000 executions at the same time, which is a maximum set by a limit within Oracle, we also introduced EntityGraph, which tells part of the Oracle software that it should pre-fetch child entities and grandchild entities when it is loading a program execution,” explains Alexander Lueders, who was part of the team that developed the tool. Memory management became a problem with the pre-fetching, so the team reduced the size of each chunk. Those changes in design reduced migration time from 14 days to four days. The team had moved the tool much closer to the performance needed for practical use. “That was a huge impact,” adds Alexander.

The fourth optimization involved pre-loading data. The team introduced pre-loading because when the tool fetched parent and child executions, it wound up getting clones. The solution was to find the original and pre-load that execution. Taking this approach also reduced the query to only the data they needed and not the entire entity, so the tool could afford to store it in memory. The data was pre-loaded during initialization and a map was built in memory. Building the map took about 30 seconds, and saved millions of queries. This was cleaner and more efficient, but had only a minor effect on overall performance.

The fifth optimization brought another big jump in performance. “We had two lookups – before and after the execution – for the version of every object that was used in an execution,” explains Henrik Steudel, another team member. “With an average of 43 objects per execution that added up to a total of 550 million lookups for our database of 5 million executions, and because of the massive amount of versions pre-caching was not feasible.” The team chose to use Caffeine Cache with Spring to implement a caching strategy. The lookup time for each one dropped from 1.6ms to 0.3ms, a roughly five-fold improvement. Those accumulated gains dropped the migration time from four days to half a day. The tool was now processing about as fast as it could write to MongoDB.
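The principle behind this kind of caching can be shown with Python's standard library. Note that this sketch uses `functools.lru_cache`, not Caffeine or Spring, and that the lookup function, its arguments and the cache size are hypothetical stand-ins for the real version lookup.

```python
from functools import lru_cache


@lru_cache(maxsize=100_000)  # bounded cache: least recently used entries are evicted
def version_at(object_id, timestamp):
    """Stand-in for an expensive database lookup of an object's version."""
    return f"{object_id}@v{timestamp // 100}"


version_at("dataset_a", 150)  # first call: computed (a cache miss)
version_at("dataset_a", 150)  # repeated call: served from the cache (a hit)
print(version_at.cache_info().hits)    # 1
print(version_at.cache_info().misses)  # 1
```

A bounded cache like this fits the situation described above: loading every version up front was not feasible, but keeping recently used results in memory still avoids repeating the same query when the same object and point in time come up again.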

With its process of optimization, the team improved performance by a factor of roughly 27, going from 14 days for a migration to 12 hours.

Conclusions

What are the general lessons learned for optimizing and improving performance?

Understanding the data and data structures is key to optimizing. “Be sure to know the settings and limitations in the underlying technology,” says Vegard. “In this case, key parameters turned out to be the Oracle fetch size, IN query limit, and MongoDB saveAll vs insert.”

Using the right tool for the situation and combining multiple optimizations led to huge benefits. Analyzing which entity data was actually needed for loading revealed ways to reduce overhead. Optimization can be a trial-and-error process that takes time.

Working on real data demonstrated how large datasets that grow over time tend to accumulate anomalies that have to be considered during migration. There’s nothing like real-world data to bring out corner cases.

By the end of the process, the team had delivered a migration tool that was showing significant improvement from its first iteration. The previous system could be migrated to an underlying MongoDB system without affecting the rest of the entimICE system, gaining significant performance improvements in the overall system and ensuring compatibility with future updates or service packs.

* Upper image by Wikimedia user Famberhorst under a Creative Commons Attribution-Share Alike 4.0 International license. Lower image released to the public domain by its creator.

- Written by: Douglas Merrill

The Most Colorful Peacock

How does the CEO of a respectable IT company end up writing about birds, fairy tales and Greek mythology in his very first company blog article?

I will take you to the answer to this question, but we have to start at the beginning.

About feathers and ambitions

entimo has been providing software products and professional services to the pharmaceutical industry since its founding in 2003. Our products are used in the areas of Statistical Computing, Clinical Data Management and Standards Management by some of the top 10 pharma companies and many small to medium-sized companies around the world.

How can a company with 50 employees serve the global leaders in their core business processes?

The answer is simple. entimo is a reliable business partner to all customers. Our relationships have lasted for decades and are based on a solid foundation of trust. As the saying goes, trust is something you earn and not something that is given. entimo is ambitious about constantly improving towards perfection. This applies to our processes, quality, transparency and our own culture. That may be one of the reasons we have earned the trust of our employees. Many of them have been with us right from the start, many more joined us during the journey and most of them have stayed with us until today. The consistency in our teams, the agility in our minds and the ambitions in our goals seem to have earned us the trust of our customers as well.

And what about the feathers?

We are in the middle of developing the next evolution of our products and it will for sure revolutionize the market. It has the power to change the vision of the pharmaceutical research and development process, away from individual and monolithic software products with clunky interfaces towards a holistic ecosystem that puts the clinical data and the people who work with it at the center.

entimo is looking for additional ways to bring interested parties closer together and to involve you in the development of our future. This is where the idea of this blog comes in, with the goal of establishing a new communication channel between our company, its customers and any other interested party in the life sciences industry. The thing with blogs is: they have to be attractive in order to be read.

So if you have never written a blog before and are striving to create a perfect blog in order to attract your readers, you start reading blogs. Then you read blogs about blogging and you end up consuming questionable expertise about what a perfect blog should look like. You learn a lot about golden rules, do's and don'ts and the importance of titles. Finally you stumble across advice on how to find the perfect title for your blog posts and they tell you that the most colorful peacock draws the most attention.

And here we are now in the middle of this article and you followed along because the colorful peacock drew your attention. But there is more to discover.

About the ugly duckling

Catching someone's attention with an attractive title is not enough to make a blog post worthwhile. It should tell you something of interest, inspire you or at least be entertaining. At best, all of the above.

Something inspiring

Now entimo is taking its first steps in the world of blogs. Thinking of a company full of software developers with lots of coding, coffee and pizza certainly does not strike the mind with the picture of a colorful peacock. You might rather have a flock of pigeons or sparrows in mind.

The analogy that came to my mind was "The Ugly Duckling", a fairy tale by the Danish author Hans Christian Andersen, first published in 1843. It is a heart-warming story about a little bird, raised by a duck but rejected by its siblings and other animals because it is such an ugly duckling. After a winter of loneliness and despair, the little ugly duck has grown but still feels miserable and tries to end its life by getting killed by a flock of beautiful swans. To its surprise, the swans take the duckling in as one of them, and the bird finally realizes it has been a swan itself all the time. The fairy tale ends with the swan flying away with its new family.

We are the swan

How does this relate to a software company familiarizing itself with writing a blog? One might argue that writing software and writing blogs are technically close and should be a natural fit. Having done both myself now, I can tell you: they are not. It feels like comparing a bookbinder with a poet. Nonetheless, there is a deeper truth in it, as we at entimo have stories to share with you and those stories come from our professional lives in a software company. So, in a greater sense, we are the swan of this story, recognizing at the end that we have been bloggers for a long time but simply never realized it, and thus never lived up to it by sharing the stories that could have been shared long ago.

About phoenix and the ashes

Up to this point I have been talking about the most colorful peacock and the ugly duckling and explained how these birds relate to entimo as a company that is finding new ways to engage more closely with its customers. And if you think this is enough of questionable analogies between birds and software specialists... there is one more:

We are the phoenix

This bird from ancient Greek mythology, said to die in flames only to be reborn from its own ashes, has appeared in all kinds of art throughout the ages.

Shakespeare wrote about it in Henry VIII, as did J. K. Rowling in Harry Potter. Queen honored the bird in their band logo and Conchita Wurst won the Eurovision Song Contest 2014 with the song "Rise Like a Phoenix". And even modern computer games like Starcraft 2 and DOTA 2 make use of this mythological figure.

The phoenix often appears as a symbol of renewal and reinvention. And this applies to entimo just as much. With the switch to agile methodologies a few years ago, we adopted a mindset that constantly strives for renewal and reinvention in order to keep the wheels of improvement turning. The development of our future platform as a natural evolution of our existing products is just another example of this.

The rebirth of the phoenix is associated with an end and a new beginning, giving room for new ideas, making things better than yesterday. This is the path to remain successful in any business and our customers are adopting this philosophy just as we are.

And this is it. When you want to hook people on your blog, find catchy titles, appealing pictures and a worthwhile topic. Fill it with some random facts and the information you really want to share. Of course, all of this has to be polished by a conclusive summary and a call to action.

TL;DR

Our goal for the first entimo blog article is not to teach you a lesson about birds. It is to share some insights about who we are as a company and where we would like to go in the future. And most importantly, how we hope that this blog can help us to take you along on this journey.

If you are interested in reading more about what we are doing here at entimo, besides ornithology, you are invited to drop a comment and make suggestions for any future topics you would like to hear more about.

Less bird-oriented proposals are most welcome.

May the upcurrents carry you safely through these stormy times,

Marc Jantke (CEO)

- Written by: Marc Jantke